AI Research & Prototypes

Exploring new creative paradigms through AI, real-time systems, and speculative design

Featured Prototypes

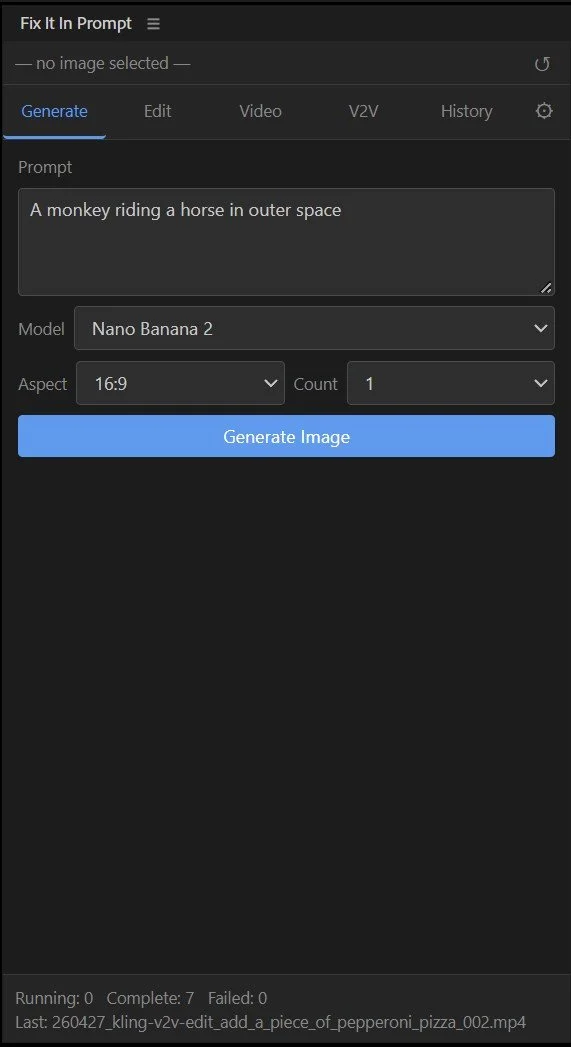

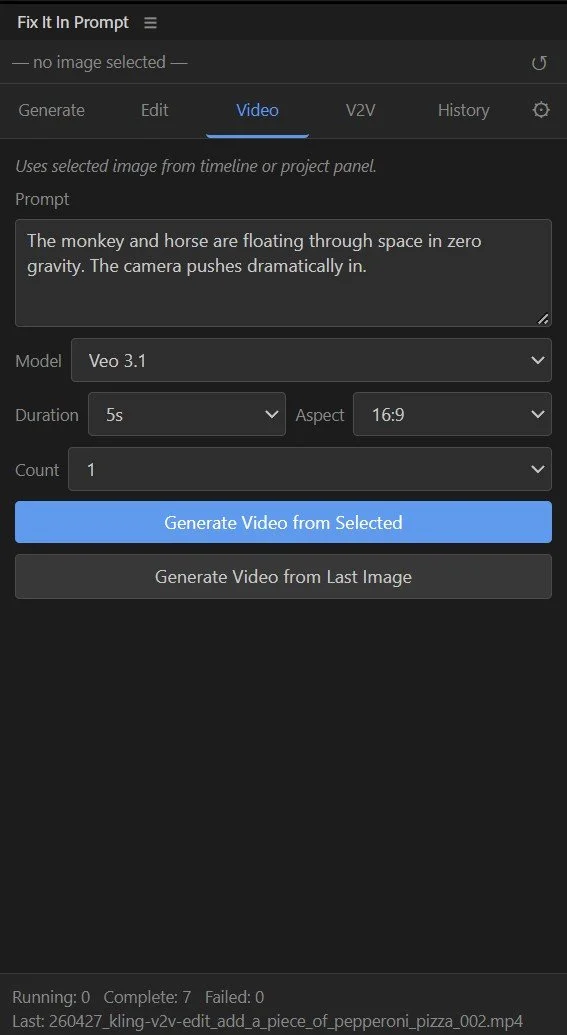

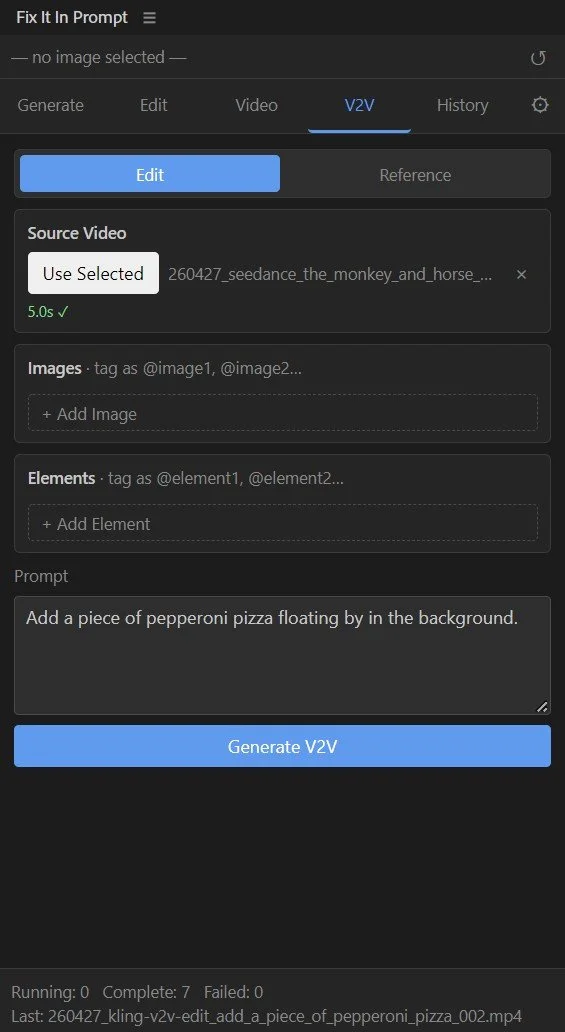

“Fix It In Prompt” - Adobe Premiere Pro Plugin

Generate AI video and images directly in Adobe Premiere Pro! With an easy-to-use panel interface, this plugin lets you skip the hassle of external platforms and have your clips and images appear right on your timeline.

It felt like an obvious need. Why go to external platforms like Krea, Flora, or Weavy when you can generate directly in the software you are editing in? (Though there is definitely a time and place for those tools.)

This tool covers image generation across a range of AI models, plus AI image editing using Google Nano Banana 2. It also generates video using models like Seedance 2.0, Kling 3, and Veo 3.1, and allows for video-to-video editing using Kling o3. It is built on the fal.ai API, which provides access to multiple models.
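As a rough illustration only (not the plugin's actual code), the task-to-model split described above could be held in a small registry that validates a request before anything is sent to a generation API. The model labels come from the text; the task names, the `MODELS_BY_TASK` layout, and the `build_request` helper are all hypothetical, and no real fal.ai endpoint or call is shown.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Model labels are taken from the description above. The task names and this
# registry layout are assumptions made purely for illustration.
MODELS_BY_TASK: Dict[str, List[str]] = {
    "image_edit": ["Google Nano Banana 2"],
    "text_to_video": ["Seedance 2.0", "Kling 3", "Veo 3.1"],
    "video_to_video": ["Kling o3"],
}


@dataclass(frozen=True)
class GenerationRequest:
    """A validated request, ready to hand to whatever API client is in use."""
    task: str
    model: str
    prompt: str


def build_request(task: str, prompt: str, model: Optional[str] = None) -> GenerationRequest:
    """Pick a model for the task (first listed by default) and validate inputs."""
    if task not in MODELS_BY_TASK:
        raise ValueError(f"unknown task: {task!r}")
    options = MODELS_BY_TASK[task]
    chosen = model if model is not None else options[0]
    if chosen not in options:
        raise ValueError(f"{chosen!r} is not registered for task {task!r}")
    cleaned = prompt.strip()
    if not cleaned:
        raise ValueError("prompt must not be empty")
    return GenerationRequest(task=task, model=chosen, prompt=cleaned)
```

Keeping the routing in one table means a new model can be offered in the panel by adding a single entry, without touching the request logic.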

I plan to upload this to GitHub but have not gotten to it yet. I will update here soon!

Tethered AI Photography

What sets this prototype apart is not the AI image generation piece (that is ubiquitous at this point), but that it creates a system allowing for AI mediation in a real-time tethered photography workflow. You shoot an image live with the camera, and a few seconds later the AI-mediated image appears on your screen based on your input prompt.

This prototype adds a layer of AI mediation to professional photo capture. While shooting live, the photographer and clients can use AI to composite the product or subject into entirely new environments on the spot, updating the prompt mid-shoot to effectively change “location.” The photographer retains control over composition, while AI expands what’s possible. The result is more granular control and a more professional, client-focused workflow that can be executed live on set, just like a traditional shoot.
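One way such a loop could be wired up, sketched under loud assumptions: the tethering software is assumed to save each capture into a watched folder, and a hypothetical `process` callback stands in for whatever sends the image and prompt to an AI model. Neither detail is confirmed by the description above; this only shows how a prompt changed mid-shoot would apply to the next frame.

```python
from pathlib import Path
from typing import Callable, List, Set

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".tif", ".tiff"}


class TetherWatcher:
    """Polls a capture folder and hands each new image to a callback.

    Assumption: captures land as files in `folder`. `process` is a
    hypothetical stand-in for the AI compositing step.
    """

    def __init__(self, folder: Path, process: Callable[[Path, str], None], prompt: str) -> None:
        self.folder = folder
        self.process = process
        self.prompt = prompt  # can be reassigned at any time during the shoot
        self._seen: Set[Path] = set()

    def poll_once(self) -> List[Path]:
        """Process images not seen before, using the prompt as it is right now."""
        new_files = sorted(
            p for p in self.folder.iterdir()
            if p.suffix.lower() in IMAGE_SUFFIXES and p not in self._seen
        )
        for path in new_files:
            self._seen.add(path)
            self.process(path, self.prompt)
        return new_files
```

In a live setting `poll_once` would be called on a short timer; a file-system notification library could replace polling, at the cost of an extra dependency.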

Prototype: Shot Caller - App for Professional AI Video Pre-Production

I made Shot Caller because I needed a better way to handle AI video pre-production in a professional workflow.

Most AI video tools are great at generating clips, but the process of actually developing a coherent set of visuals before generation still feels scattered. In practice, a lot of AI video workflows depend on still image inputs to maintain consistency across characters, wardrobe, products, and shot design. I wanted a workspace that reflected that reality and made it easier to build a project in a way that feels closer to real pre-pro.

Shot Caller walks through casting, wardrobe, product, shot composition, shot editing, and final storyboard assembly inside one organized system. Built on Google’s Nano Banana image editor, it’s designed to help create, refine, organize, and download the still images needed to generate shots in the AI video model of your choice.

It’s basically the tool I wanted to have: a place to build AI video projects with more structure, more clarity, and a cleaner path to client approvals before moving into generation.

Prototype: AI Copywriter Council

This prototype was inspired by Andrej Karpathy’s LLM council project on GitHub. His project queries 4 major LLMs (ChatGPT, Claude, Gemini, Grok) and has them check each other’s work, with the goal of a stronger, less hallucinated final answer.

Where my idea diverges is: you don’t get a single output. You are essentially chatting with all 4 LLMs at the same time, and it is specifically geared as a copywriting tool for in-house copywriters at brands. Maybe you have a bunch of lines you love and want to generate some variations? Maybe you want some inspo? Maybe you are doing A/B testing and want to maximize your possibilities? Then this AI copywriting tool might be for you!
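The "chat with all four at once" idea can be sketched as a concurrent fan-out that returns every reply side by side instead of merging them. This is a minimal sketch, not the prototype's code: `ask_council` and `make_stub` are hypothetical names, and the stub functions stand in for real provider clients, which are not shown here.

```python
import asyncio
from typing import Awaitable, Callable, Dict

# Each council member is any async function that takes a prompt and returns text.
ModelFn = Callable[[str], Awaitable[str]]


async def ask_council(prompt: str, council: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send one prompt to every model concurrently and keep each reply separate."""
    names = list(council)
    results = await asyncio.gather(
        *(council[name](prompt) for name in names),
        return_exceptions=True,  # one failing model should not sink the others
    )
    replies: Dict[str, str] = {}
    for name, result in zip(names, results):
        if isinstance(result, Exception):
            replies[name] = f"[{name} failed: {result}]"
        else:
            replies[name] = result
    return replies


def make_stub(name: str) -> ModelFn:
    """Hypothetical stand-in for a real API client, used only for this sketch."""
    async def reply(prompt: str) -> str:
        await asyncio.sleep(0)  # yield control, as a network call would
        return f"{name} variation of: {prompt}"
    return reply
```

A caller would build the council as a dictionary of name to function and run `asyncio.run(ask_council("Your tagline here", council))`, then show the four replies in parallel columns.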

Custom AI Model Training - Matching Illustration Style

In collaboration with my colleague Stephen Halker (a highly talented illustrator), I set out to compile an informal dataset of his drawings and use it to train a custom model on his specific illustration style. For model training, we used Adobe Firefly. I was quite impressed with the results. Below you can see samples from the original dataset and then AI image generations we created after the model training was complete.

Training Images

Generated Images From the Custom Model

The model did a fairly amazing job of nailing the flat 2-dimensional style, the color palette, and the general tone of the training images. It didn’t hit on every generation, but the hit rate was high enough to be noteworthy. Even more than that, what impressed me was the model’s ability to extrapolate the style to variations on the original dataset. As you can see below, we started to test how far we could take it before it would lose its way.

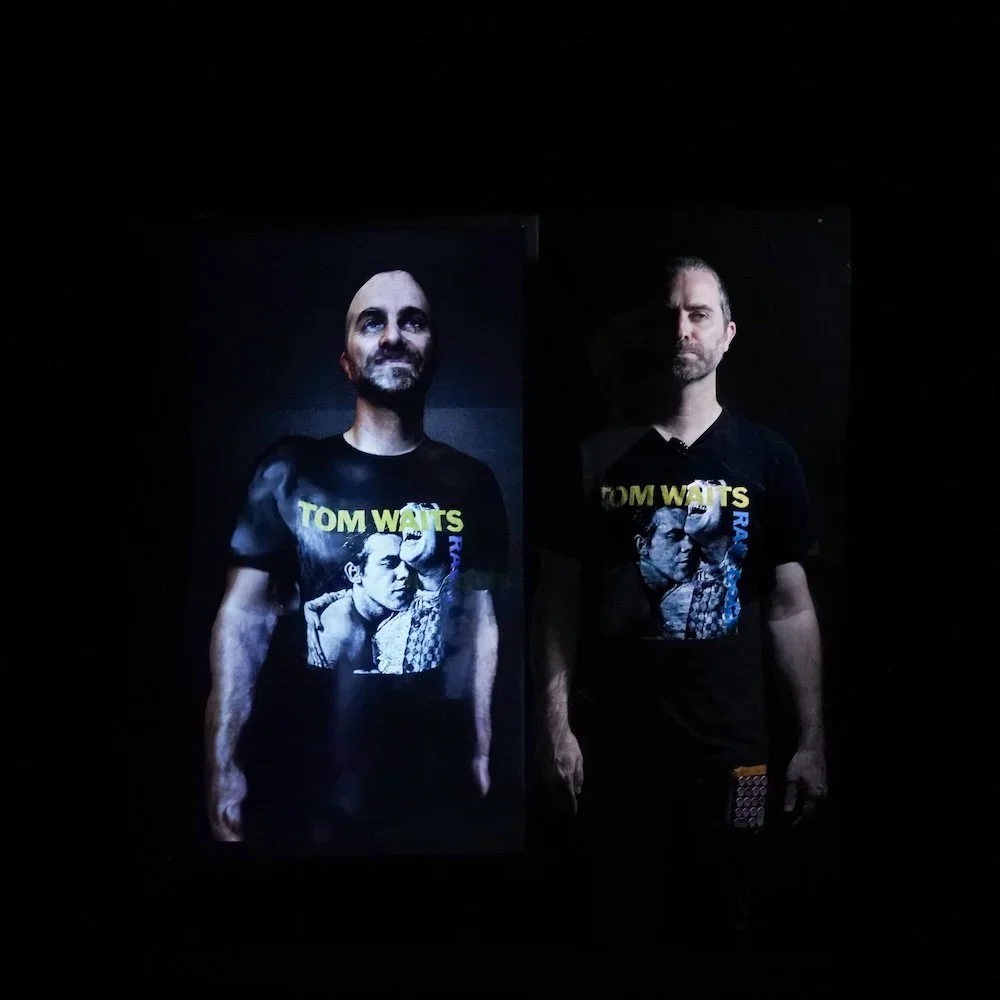

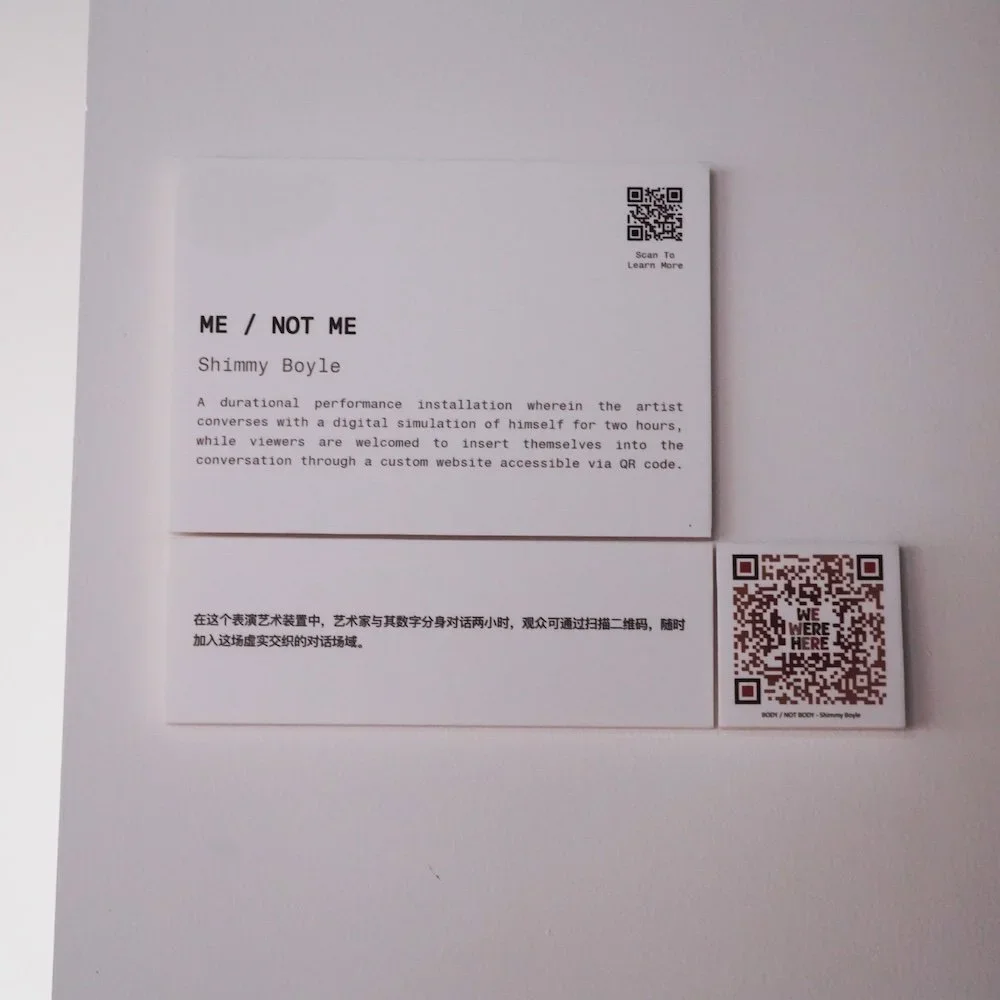

BODY / NOT BODY: A Simulation of Self

A durational performance installation that uses a fine-tuned large language model, voice cloning, and a digital avatar to create a simulation of myself that can be conversed with in real time. This work explores digital self-representation, post-human embodiment, and the boundaries of authorship in an age of generative media.

Endless Story: A Spoken AI Choose Your Own Adventure

Made specifically for my 5-year-old daughter, Frances, this is a voice-activated, choose-your-own-adventure web app designed for non-reading children. I wanted to experiment with customizing LLMs to achieve unique results beyond simple chatbots and to explore their generative capacity. Built using the ChatGPT API, the prototype generates unique fairytales in real time, always starring “Princess Frances” and a magical sidekick. The project explores how generative AI can support personalized, spoken storytelling experiences for kids, laying the groundwork for future iterations with customization, real-time responsiveness, and narrative structure.
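A branching story like this can be modeled as a running transcript plus a prompt that is rebuilt on every turn. The sketch below is illustrative only: the `Story` class, the prompt wording, and the "two choices" rule are assumptions, and `generate` is a hypothetical callback standing in for the language model call, which is not shown.

```python
from dataclasses import dataclass, field
from typing import Callable, List

HERO = "Princess Frances"  # the fixed star of every story, per the description


@dataclass
class Story:
    """Tracks the story so far and builds the prompt for the next passage."""
    sidekick: str
    turns: List[str] = field(default_factory=list)

    def next_prompt(self, spoken_choice: str = "") -> str:
        # Prompt wording and the two-choice rule are assumptions for this sketch.
        lines = [
            f"Tell the next short part of a fairytale starring {HERO} "
            f"and her magical sidekick, {self.sidekick}.",
            "End with two choices the child can say out loud.",
        ]
        if self.turns:
            lines.append("Story so far: " + " ".join(self.turns))
        if spoken_choice:
            lines.append(f"The child chose: {spoken_choice}")
        return "\n".join(lines)

    def advance(self, generate: Callable[[str], str], spoken_choice: str = "") -> str:
        """Ask the model for the next passage and add it to the transcript."""
        passage = generate(self.next_prompt(spoken_choice))
        self.turns.append(passage)
        return passage
```

In a voice-driven app, `spoken_choice` would come from speech recognition and the returned passage would go to text-to-speech; both pieces are outside this sketch.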

Unicorn Princess Super Adventure - Retro Platform Video Game

A glitter-drenched, 8-bit fever dream where you can’t lose—only win louder. Built in p5-play, this maximalist platformer flips classic video game logic on its head: every object you collect multiplies, your unicorn grows absurdly large, and eventually the world is swallowed in sparkles. Equal parts Lisa Frank and late-capitalist satire, it’s a joyful, purposeless romp designed to disrupt expectations.

Death Is Not the End - Web Experience

A tongue-in-cheek existential scrollytelling experience featuring rotating 3D skulls, smooth elevator jazz, and uncanny AI-generated compliments made using an AI voice clone of myself. Built with Three.js, GSAP, and ElevenLabs, the piece subverts traditional narrative formats by combining WebGL visuals with surreal affirmation—offering a meditation on death, absurdity, and digital intimacy.

Party Time - Web Experience

A chaotic, joyfully nonsensical browser experience built with WebSockets and dripping in ‘90s clip art and MS Paint energy. Designed for collaborative mayhem, users create audiovisual collages in real time—though the real prize lies in catching the elusive bouncing button. If you can hit it, you’ll be rewarded. Probably.

Final Thoughts

These speculative works are more than experiments—they’re proof-of-concept provocations. They inform my approach to commercial and institutional creative work, giving me firsthand knowledge of the tools, affordances, and cultural implications of emerging technology.

By maintaining an R&D practice rooted in performance, embodiment, and code, I stay fluent in the language of innovation—positioning myself to not just adopt the future of creative work, but help shape it.